What Is an AI Kiss Video Generator

Explore what an AI kiss video generator is, how it works, the ethical and legal considerations, and best practices for responsible use. Learn key concepts, safeguards, and practical steps for creators.

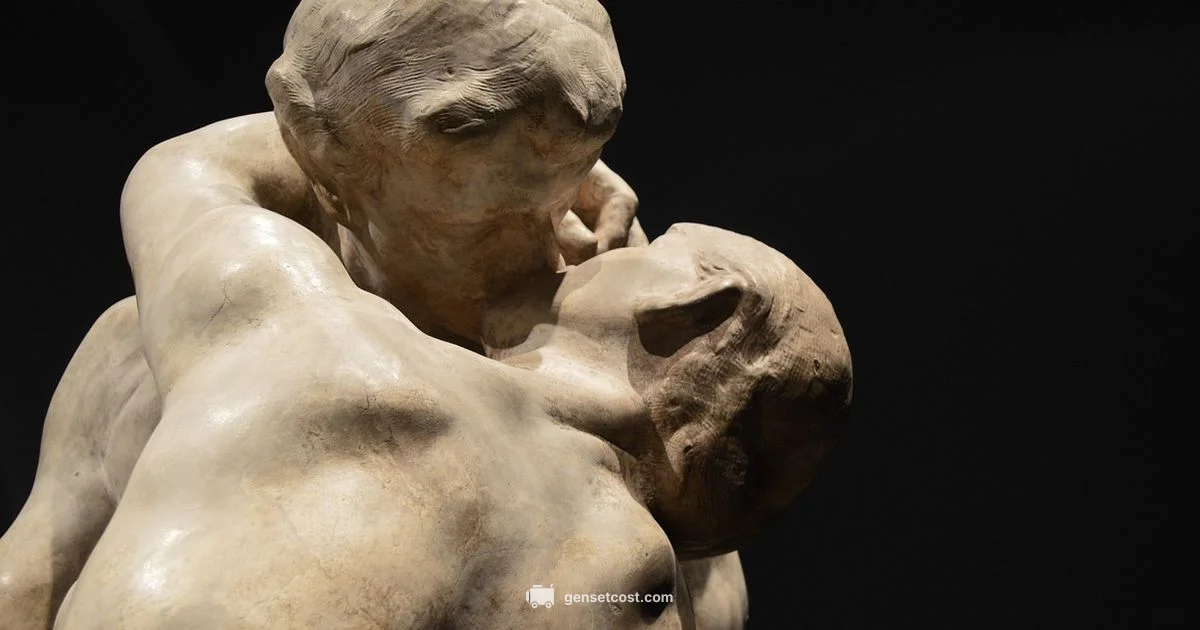

What an AI Kiss Video Generator Is

According to Genset Cost, an AI kiss video generator is an AI tool that creates or edits video scenes depicting a kiss using generative models such as GANs or diffusion models. These tools can synthesize facial movements, expressions, and lighting to produce believable sequences, or apply a specific artistic style to an existing clip. They sit within the broader category of synthetic media, which includes deepfake techniques and video reenactment. Users typically supply prompts, reference footage, or audio cues, and the model outputs a new sequence that matches the desired mood, setting, or motion. Because the output can resemble real people, responsible use and clear disclosure are essential for trust and safety in digital media creation.

How These Tools Work Under the Hood

Most AI kiss video generators rely on one of two core approaches: diffusion models or generative adversarial networks (GANs). Diffusion models gradually refine noise into coherent frames, guided by text prompts or example videos. GAN-based systems train a generator against a discriminator: the discriminator learns to tell real frames from synthetic ones, which pushes the generator toward more realistic texture, hair, and fabric details. Modern pipelines may combine diffusion with motion capture signals, audio lip-sync conditioning, and identity preservation modules to maintain character likeness. Training data is a critical factor: models learn from large corpora of video frames, yet creators must balance dataset breadth against privacy and consent considerations. Hardware acceleration, such as GPUs or TPUs, significantly affects speed and cost, especially for high-resolution outputs.
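To make the "refine noise into coherent frames" idea concrete, here is a minimal sketch of a deterministic reverse-diffusion loop (DDIM-style updates) on a tiny list of pixel values. It is a toy under stated assumptions: the function names, the linear noise schedule, and the four-pixel "frame" are all illustrative, and `oracle_noise_predictor` is a stand-in that already knows the target, whereas a real system would use a trained neural network conditioned on prompts or reference footage.

```python
import math
import random


def make_alpha_bars(num_steps):
    """Cumulative signal fractions: index 0 is fully clean (1.0), higher indices are noisier."""
    betas = [1e-4 + (0.02 - 1e-4) * t / (num_steps - 1) for t in range(num_steps)]
    alpha_bars = [1.0]
    for beta in betas:
        alpha_bars.append(alpha_bars[-1] * (1.0 - beta))
    return alpha_bars


def oracle_noise_predictor(x_t, alpha_bar, target):
    """Hypothetical stand-in for a trained network: it knows the target, so its noise estimate is exact."""
    return [
        (x - math.sqrt(alpha_bar) * x0) / math.sqrt(1.0 - alpha_bar)
        for x, x0 in zip(x_t, target)
    ]


def sample(target, num_steps=100, seed=0):
    """Start from Gaussian noise and apply deterministic denoising steps until the clean level."""
    rng = random.Random(seed)
    alpha_bars = make_alpha_bars(num_steps)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # pure noise, same size as the frame
    for t in range(num_steps, 0, -1):
        a_t, a_prev = alpha_bars[t], alpha_bars[t - 1]
        eps = oracle_noise_predictor(x, a_t, target)
        # Estimate the clean frame implied by the current sample and the predicted noise.
        x0_pred = [(xi - math.sqrt(1.0 - a_t) * e) / math.sqrt(a_t) for xi, e in zip(x, eps)]
        # Re-noise that estimate to the next, slightly cleaner level.
        x = [math.sqrt(a_prev) * p + math.sqrt(1.0 - a_prev) * e for p, e in zip(x0_pred, eps)]
    return x


frame = [0.2, 0.8, 0.5, 0.1]  # illustrative "pixel" values
print(sample(frame))
```

Because the oracle is exact, the loop recovers the target frame up to floating-point error. With a learned predictor the estimate is only approximate at each step, which is one reason real samplers depend on many steps and on good conditioning signals.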

Ethical and Legal Considerations

The ethical terrain around AI kiss video generators is complex. Consent from all depicted individuals is essential, particularly when real people are represented or when recognizable features are used. Laws regarding deepfakes vary by jurisdiction but often address deception, copyright, and right of publicity. Platform policies frequently require disclosure and prohibit impersonation without consent. Watermarking, clear labeling, and audit trails help combat misuse. Respecting privacy and avoiding exploitation of vulnerable groups are foundational to responsible practice. For educators and creators, balancing artistic expression with transparency and consent is key.

Common Use Cases and Scenarios

Creatives use AI kiss video generators for concept testing, music videos, animated shorts, and satire, provided consent and legal requirements are met. In film and advertising, they can prototype scenes quickly before shooting, saving time and resources. Educational contexts may employ synthetic scenes to illustrate animation techniques or storytelling without involving real individuals. Prosocial applications include choreography planning, visual effects replacement, and archival restoration where ethical guidelines are followed. Adopters should avoid disseminating misleading content that could harm someone’s reputation or violate their rights.

Quality Factors and Limitations

Output quality depends on model capacity, dataset quality, and prompt clarity. Common strengths include realistic lip-sync and natural lighting under controlled prompts. Limitations frequently arise in extreme lighting, complex backgrounds, or subtle facial micro-expressions, which can produce artifacts, uncanny color shifts, or unstable frames. Resolution and frame rate play a major role in perceived realism; higher specs demand more compute and time. Users should check outputs for artifact patterns, motion continuity, and identity consistency, recognizing that synthetic outputs may not perfectly replicate real individuals or scenes.
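One rough way to screen for unstable frames is to compare consecutive frames and flag transitions that change far more than is typical for the clip. The sketch below is a crude proxy, not a standard quality metric: the function names, the flattened-list frame format, and the `ratio` threshold are assumptions chosen for illustration, and a legitimate scene cut would also be flagged.

```python
from statistics import median


def mean_abs_frame_diff(frame_a, frame_b):
    """Average absolute per-pixel change between two frames given as flat lists of intensities."""
    if len(frame_a) != len(frame_b):
        raise ValueError("frames must have the same number of pixels")
    return sum(abs(a - b) for a, b in zip(frame_a, frame_b)) / len(frame_a)


def flag_unstable_transitions(frames, ratio=3.0):
    """Return indices i where the change from frame i to i+1 exceeds ratio times the median change."""
    diffs = [mean_abs_frame_diff(frames[i], frames[i + 1]) for i in range(len(frames) - 1)]
    if not diffs:
        return []
    threshold = ratio * median(diffs)
    return [i for i, d in enumerate(diffs) if d > 0 and d > threshold]


# Six tiny 4-pixel frames; the fourth one jumps in brightness.
clip = [[0.10] * 4, [0.11] * 4, [0.12] * 4, [0.60] * 4, [0.14] * 4, [0.15] * 4]
print(flag_unstable_transitions(clip))
```

In this toy clip the transitions into and out of the bright frame (indices 2 and 3) are flagged, which points a reviewer at the frame worth inspecting by eye.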

Safety, Safeguards, and Best Practices

Responsible use begins with consent, disclosure, and opt-in notices. Always obtain written permission from any real subjects and respect age and privacy laws. Watermark original outputs and include a visible disclaimer when publishing synthetic content. Use purpose-built tools that offer provenance features, version control, and rollback capabilities. Maintain a transparent workflow by documenting prompts, model versions, and training data considerations. The Genset Cost team recommends embedding privacy by design and avoiding targeted manipulation that could mislead viewers.
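The checks in this section can be turned into a simple pre-publication gate. The sketch below is only an illustration of that idea: the `PublishRequest` fields and the blocker messages are hypothetical names, not part of any specific tool, and passing the gate is not a substitute for legal review.

```python
from dataclasses import dataclass


@dataclass
class PublishRequest:
    """Hypothetical description of a clip that is about to be published."""
    depicts_real_person: bool
    written_consent_on_file: bool
    age_requirements_verified: bool
    watermark_applied: bool
    disclaimer_text: str = ""


def publication_blockers(request):
    """Return the list of unmet requirements; an empty list means the gate passes."""
    blockers = []
    if request.depicts_real_person and not request.written_consent_on_file:
        blockers.append("written consent missing for a real subject")
    if request.depicts_real_person and not request.age_requirements_verified:
        blockers.append("age requirements not verified")
    if not request.watermark_applied:
        blockers.append("output is not watermarked")
    if not request.disclaimer_text.strip():
        blockers.append("visible synthetic-content disclaimer missing")
    return blockers


draft = PublishRequest(
    depicts_real_person=True,
    written_consent_on_file=False,
    age_requirements_verified=True,
    watermark_applied=True,
    disclaimer_text="This video is AI-generated.",
)
print(publication_blockers(draft))
```

Returning every unmet requirement, rather than stopping at the first, gives the creator a complete to-do list before the content goes out.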

Comparisons of Popular Tools and Technologies

Tools vary in their core algorithms, ease of use, and output controls. Diffusion models tend to offer high realism with more flexible style control, while GAN-based systems can excel at identity preservation under tighter prompts. Open-source options often provide deeper customization but require technical expertise, whereas commercial platforms may offer user-friendly interfaces and support. When choosing a tool, weigh factors such as licensing, watermark policies, data handling, and community guidelines. Genset Cost analysis notes that decision-makers should prioritize transparency and consent over sheer capability when evaluating tools.

Practical Workflow for Content Creators

Begin with a clear brief that defines the scene, characters, style, and consent requirements. Gather reference footage or prompts, decide on a model type, and run iterative generations to refine lip-sync, lighting, and motion. Post-process outputs with color grading and stabilization as needed, and always annotate the final product with disclosure and licensing information. Maintain records of the prompts and model versions used, to support accountability and future revisions. The workflow should integrate safety checks, including content moderation steps and verification against platform policies.
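The record-keeping step can be as light as writing one small JSON record per generated clip. The sketch below assumes a hypothetical record layout (the field names, model name, and license string are placeholders) and uses a SHA-256 hash of the output bytes so that a published file can later be matched to its record.

```python
import hashlib
import json


def build_generation_record(output_bytes, prompt, model_name, model_version, disclosure, license_name):
    """Assemble an accountability record for one generated clip (illustrative layout)."""
    return {
        "output_sha256": hashlib.sha256(output_bytes).hexdigest(),
        "prompt": prompt,
        "model_name": model_name,
        "model_version": model_version,
        "disclosure": disclosure,
        "license": license_name,
    }


def record_to_json(record):
    """Serialize with sorted keys so that records are stable and easy to diff."""
    return json.dumps(record, sort_keys=True, indent=2)


record = build_generation_record(
    output_bytes=b"placeholder video bytes",  # in practice, the bytes of the exported file
    prompt="two animated characters share a kiss at sunset",
    model_name="example-diffusion-model",  # placeholder name
    model_version="0.1",
    disclosure="AI-generated; no real people depicted",
    license_name="CC BY 4.0",
)
print(record_to_json(record))
```

Storing these records alongside the project files supports both future revisions and any later question about how a clip was made.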

The Future of AI Kiss Video Generators and Responsible Innovation

As synthetic media technologies evolve, expectations grow for better controllability, higher fidelity, and stronger governance. Advances may yield improved identity controls, stronger consent workflows, and built-in content safeguards. Regulators and platforms are likely to tighten disclosure requirements and origin verification. Ongoing research into ethics, fairness, and abuse prevention will shape how creative teams deploy these tools, ensuring responsible storytelling while protecting individuals from harm. The Genset Cost team suggests staying informed about policy developments and maintaining a culture of transparency.

People Also Ask

What is an AI kiss video generator?

An AI kiss video generator is a tool that uses artificial intelligence to create or modify video scenes depicting a kiss. It relies on generative models to synthesize facial movement, lighting, and timing. These tools fall under synthetic media and require ethical use and clear disclosure.

An AI kiss video generator uses AI to create or alter a kiss scene in video form. It relies on generative models and should be used with consent and disclosure to avoid harm.

Is it legal to create deepfake kiss videos?

Legal considerations vary by jurisdiction and context. In many places, the depiction of real individuals without consent may violate rights or laws. Always secure consent, use disclaimers, and follow platform policies to minimize legal risk.

Laws differ by location, but consent and disclosure are generally essential to stay on the safe side.

Do I need consent from people depicted in the video?

Yes. Obtaining explicit, informed consent from anyone who is identifiable in the video is a fundamental ethical and often legal requirement. For non-consenting subjects, avoid creating or publishing the content.

Yes. Get clear consent from anyone identifiable before creating or sharing such content.

What are common limitations of AI kiss video generators?

Limitations include imperfect lip-sync, artifacts in background lighting, and difficulty maintaining identity across frames. High-resolution outputs require substantial compute, and models may reproduce biases present in their training data.

They can struggle with perfect lip-sync and consistent identity; high-quality results need powerful hardware and careful prompts.

How can I use these tools responsibly?

Seek explicit consent, disclose the synthetic nature of the content, watermark outputs, and avoid misrepresenting real people. Follow applicable laws and platform rules, and keep a clear audit trail of the data and prompts used.

Obtain consent, disclose that the content is synthetic, and follow platform rules for responsible use.

What should I look for when choosing a tool?

Look for clear licensing, built-in safeguards, watermark options, provenance features, and robust support. Consider the quality of lip-sync, artifact handling, and your own technical comfort level.

Check licensing, safeguards, and support, and test for lip-sync quality before committing.

Key Takeaways

- Understand the core concept and how AI kiss video generators function

- Prioritize consent, privacy, and legal compliance in every project

- Assess output quality and be mindful of artifacts and bias

- Choose tools with transparent licensing, watermarks, and provenance

- Follow platform rules and include clear content disclosures

- Adopt a responsible workflow with documentation and safety checks